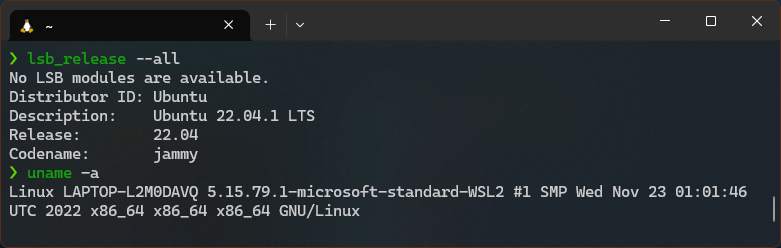

If you’re a Windows user and a Linux enthusiast, you’re probably familiar with Windows Subsystem for Linux (WSL). WSL is a feature of Windows 10 and Windows 11 that lets you run Linux command-line tools and utilities directly on Windows, without setting up a traditional virtual machine or dual-booting. One of the great things about WSL is...